Table of Contents

- – The Shift from Traditional Indexing to Intelli...

- – Infrastructure Durability for Large Scale Oper...

- – Content Intelligence and Semantic Mapping Met...

- – Technical Requirements for AI Search Optimizat...

- – Scaling Localized Exposure in San Francisco an...

- – The Future of Enterprise Technical Audits

The Shift from Traditional Indexing to Intelligent Retrieval in 2026

Large business sites now face a reality in which standard search engine indexing is no longer the end goal. In 2026, the focus has shifted toward intelligent retrieval: the process in which AI models and generative engines do not just crawl a site but try to understand the underlying intent and factual accuracy of every page. For organizations operating in San Francisco or other metropolitan areas, a technical audit must now account for how these enormous datasets are interpreted by large language models (LLMs) and Generative Engine Optimization (GEO) systems.

Technical SEO audits for enterprise sites with countless URLs require more than checking status codes. The sheer volume of data calls for a focus on entity-first structures. Search engines now prioritize sites that clearly define the relationships between their services, locations, and personnel. Many companies now invest heavily in Content Marketing to ensure that their digital assets are properly categorized within the global knowledge graph. This involves moving beyond simple keyword matching and looking at semantic relevance and information density.

Infrastructure Durability for Large Scale Operations in CA

Maintaining a site with hundreds of thousands of active pages in San Francisco requires an infrastructure that prioritizes render efficiency over simple crawl frequency. In 2026, the concept of a crawl budget has evolved into a compute budget. Search engines are more selective about which pages they spend resources on to render fully. If a site's JavaScript execution is too resource-heavy or its server response time lags, the AI agents responsible for data extraction may simply skip large sections of the directory.

Auditing these sites involves a deep examination of edge delivery networks and server-side rendering (SSR) configurations. High-performance enterprises often find that localized content for San Francisco or other specific territories needs distinct technical handling to maintain speed. More companies are turning to Effective Content Marketing Frameworks for growth because they address the low-level technical bottlenecks that prevent content from appearing in AI-generated answers. A delay of even a few hundred milliseconds can lead to a significant drop in how often a site is used as a primary source for search engine answers.
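One way to make the response-time concern concrete is to flag pages whose typical response time exceeds a chosen budget. The sketch below is illustrative only: the 500 ms budget, the function name `pages_over_budget`, and the sample timings are assumptions, not figures from any search engine.

```python
from statistics import median


def pages_over_budget(timings_ms, budget_ms=500.0):
    """Return {url: median_ms} for pages whose median response time exceeds the budget.

    timings_ms maps a URL path to a list of measured response times in milliseconds.
    The 500 ms default is an assumed threshold for illustration.
    """
    over = {}
    for url, samples in timings_ms.items():
        if not samples:
            continue  # no measurements yet, nothing to judge
        typical = median(samples)
        if typical > budget_ms:
            over[url] = typical
    return over


# Hypothetical measurements gathered by a monitoring job.
samples = {
    "/services/audit": [120.0, 140.0, 135.0],
    "/locations/san-francisco": [640.0, 710.0, 590.0],
}
slow_pages = pages_over_budget(samples)
```

Using the median rather than the mean keeps a single slow outlier from flagging an otherwise healthy page.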

Content Intelligence and Semantic Mapping Methods

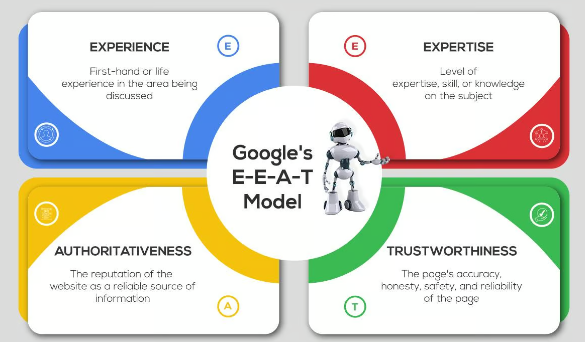

Content intelligence has become the foundation of modern auditing. It is no longer enough to have high-quality writing. The information must be structured so that search engines can verify its accuracy. Industry leaders like Steve Morris have noted that AI search visibility depends on how well a site provides "verifiable nodes" of information. This is where platforms like RankOS come into play, offering a way to examine how a site's data is perceived by multiple search algorithms at once. The goal is to close the gap between what a company provides and what the AI predicts a user needs.

Auditors now use content intelligence to map out semantic clusters. These clusters group related topics together, ensuring that an enterprise site has "topical authority" in a specific niche. For a company offering professional services in San Francisco, this means making sure that every page about a specific service links to supporting research, case studies, and local data. This internal linking structure acts as a map for AI, guiding it through the site's hierarchy and making the relationship between pages clear.
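The internal-linking check described above can be sketched as a small graph audit. The page-type labels (`service`, `research`, `case_study`, `local`), the function name, and the sample URLs below are hypothetical; a real audit would derive them from a crawl.

```python
# Supporting page types every service page is expected to link to (assumed labels).
REQUIRED_SUPPORT = {"research", "case_study", "local"}


def missing_support_links(page_types, links):
    """Return {service_page: [missing supporting types]} for incomplete clusters.

    page_types maps a URL path to a type label.
    links maps a URL path to the set of URL paths it links to.
    """
    gaps = {}
    for page, kind in page_types.items():
        if kind != "service":
            continue
        # Collect the types of all pages this service page links to.
        linked_kinds = {page_types.get(target) for target in links.get(page, set())}
        missing = REQUIRED_SUPPORT - linked_kinds
        if missing:
            gaps[page] = sorted(missing)
    return gaps


page_types = {
    "/services/audit": "service",
    "/services/migration": "service",
    "/research/crawl-study": "research",
    "/cases/retail": "case_study",
    "/locations/sf": "local",
}
links = {
    "/services/audit": {"/research/crawl-study", "/cases/retail", "/locations/sf"},
    "/services/migration": {"/research/crawl-study"},
}
gaps = missing_support_links(page_types, links)
```

Here `/services/audit` forms a complete cluster, while `/services/migration` is reported as lacking case study and local links.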

Technical Requirements for AI Search Optimization (AEO/GEO)

As search engines transition into answer engines, technical audits must evaluate a site's readiness for AI Search Optimization. This includes the implementation of advanced Schema.org vocabularies that were once considered optional. In 2026, specific properties like mentions, about, and knowsAbout are used to signal expertise to search bots. For a site localized for CA, these markers help the search engine understand that the business is a legitimate authority within San Francisco.

Data accuracy is another critical metric. Generative search engines are designed to avoid "hallucinations" and the spread of misinformation. If an enterprise site has conflicting information, such as different prices or service descriptions across various pages, it risks being deprioritized. A technical audit must include a factual consistency check, often performed by AI-driven scrapers that cross-reference data points across the entire domain. Organizations increasingly rely on SEO Services for Businesses to stay competitive in an environment where factual accuracy is a ranking factor.
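As a minimal sketch of how these properties might appear in markup, the snippet below builds a JSON-LD object in which a `WebPage` carries `about` and `mentions`, and its publishing `Organization` carries `knowsAbout` and `areaServed`. The organization name, topics, and helper function are hypothetical placeholders; only the Schema.org type and property names are real vocabulary.

```python
import json


def build_page_jsonld(page_name, topic, mentioned, org_name, expertise, city):
    """Build a JSON-LD dict for a service page (illustrative structure only)."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": page_name,
        # 'about' and 'mentions' are CreativeWork properties, so they sit on the page.
        "about": {"@type": "Thing", "name": topic},
        "mentions": [{"@type": "Thing", "name": m} for m in mentioned],
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            # 'knowsAbout' and 'areaServed' are Organization properties.
            "knowsAbout": list(expertise),
            "areaServed": {"@type": "City", "name": city},
        },
    }


doc = build_page_jsonld(
    page_name="Enterprise Technical SEO Audits",
    topic="Technical SEO audit",
    mentioned=["Server-side rendering", "Structured data"],
    org_name="Example Co",  # hypothetical organization
    expertise=["Technical SEO", "Content intelligence"],
    city="San Francisco",
)
jsonld_text = json.dumps(doc, indent=2)
```

The resulting `jsonld_text` would typically be embedded in a `<script type="application/ld+json">` element in the page head.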
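A factual consistency check of this kind can be approximated by grouping extracted data points by key and reporting any key that has more than one distinct value. This is a simplified sketch: the `(url, key, value)` tuples stand in for whatever a scraper would extract, and the function name and sample data are assumptions.

```python
from collections import defaultdict


def find_conflicts(facts):
    """Return {key: {value: [urls]}} for keys stated with more than one distinct value.

    facts is an iterable of (url, key, value) tuples extracted from the site.
    Values are compared after trimming whitespace and lowercasing.
    """
    seen = defaultdict(lambda: defaultdict(list))
    for url, key, value in facts:
        seen[key][value.strip().lower()].append(url)
    return {key: dict(values) for key, values in seen.items() if len(values) > 1}


# Hypothetical extracted data points.
facts = [
    ("/pricing", "audit_price", "$4,000"),
    ("/services/audit", "audit_price", "$4,500"),
    ("/about", "founded", "2012"),
    ("/contact", "founded", "2012"),
]
conflicts = find_conflicts(facts)
```

Only `audit_price` is reported, because `founded` is stated identically on both pages where it appears.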

Scaling Localized Exposure in San Francisco and Beyond

Enterprise websites often struggle with local-global tension. They need to maintain a unified brand while appearing relevant in specific markets like San Francisco. The technical audit must verify that local landing pages are not just copies of each other with the city name swapped out. Instead, they need to contain unique, localized semantic entities: specific neighborhood mentions, local partnerships, and regional service variations.

Managing this at scale requires an automated approach to technical health. Automated monitoring tools now alert teams when localized pages lose their semantic connection to the main brand or when technical errors occur on specific regional subdomains. This is especially important for businesses operating in diverse areas across CA, where local search behavior can vary considerably. The audit ensures that the technical structure supports these local variations without creating duplicate content issues or confusing the search engine's understanding of the site's primary purpose.
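One simple way to detect swapped-city copies is to mask each page's city name and compare the remaining text. The sketch below uses Jaccard similarity over three-word shingles; the 0.9 threshold, the function names, and the sample pages are assumptions chosen for illustration, and other similarity measures would work as well.

```python
import re
from itertools import combinations


def shingles(text, city, size=3):
    """Return the set of word n-grams in text after masking the city name."""
    masked = text.lower().replace(city.lower(), "cityplaceholder")
    words = re.findall(r"[a-z0-9]+", masked)
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def swapped_city_pairs(pages, threshold=0.9):
    """Return (url_a, url_b, score) for page pairs that match once cities are masked.

    pages maps a URL path to a (city, body_text) tuple.
    """
    sets = {url: shingles(text, city) for url, (city, text) in pages.items()}
    flagged = []
    for u, v in combinations(sorted(sets), 2):
        score = jaccard(sets[u], sets[v])
        if score >= threshold:
            flagged.append((u, v, score))
    return flagged


# Hypothetical landing pages.
pages = {
    "/sf": ("San Francisco", "Our San Francisco team delivers technical audits for enterprise sites."),
    "/oak": ("Oakland", "Our Oakland team delivers technical audits for enterprise sites."),
    "/sj": ("San Jose", "In San Jose we partner with the downtown business association on quarterly workshops."),
}
duplicates = swapped_city_pairs(pages)
```

In this sample, the San Francisco and Oakland pages are flagged because they are identical once the city is masked, while the San Jose page is not.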

The Future of Enterprise Technical Audits

Looking ahead, technical SEO will continue to lean into the intersection of data science and traditional web development. The audit of 2026 is a live, ongoing process rather than a static document produced once a year. It involves constant monitoring of API integrations, headless CMS performance, and the way AI search engines summarize the site's content. Steve Morris often emphasizes that the companies that win are those that treat their site like a structured database rather than a collection of documents.

For an enterprise to succeed, its technical stack must be flexible. It should be able to adapt to new search engine requirements, such as the emerging standards for AI-generated content labeling and data provenance. As search becomes more conversational and intent-driven, the technical audit remains the most reliable tool for ensuring that an organization's voice is not lost in the noise of the digital age. By focusing on semantic clarity and infrastructure performance, large-scale sites can maintain their dominance in San Francisco and the wider global market.

Success in this era requires a move away from superficial fixes. Modern technical audits examine the very core of how data is served. Whether it is optimizing for the latest AI retrieval models or ensuring that a site remains accessible to traditional crawlers, the fundamentals of speed, clarity, and structure remain the guiding principles. As we move further into 2026, the ability to manage these elements at scale will define the leaders of the digital economy.
